[Note: I’ve made edits and corrections based on very helpful feedback from Eric Gray and George Hicken and on new information put out by VMware after this post was initially published. Any errors are my misinterpretation. – 01/23/2016]

VMware recently announced several technologies and initiatives related to containers and their use in building cloud-native applications. These announcements seem to have been targeted at traditional VMware customers who may be looking at new technologies such as Docker but are wary of moving away from a trusted vendor. Initiatives such as vSphere Integrated Containers and Photon Platform are designed to give these customers an alternative path to follow if they want to begin building cloud-native applications.

However, with the slew of new technologies and terms that VMware has introduced come new challenges for your traditional VMware administrators, architects, and consultants who are responsible for navigating or helping others navigate this new cloud-native landscape. This blog post is an attempt on my part to make sense of these new initiatives by comparing them with existing offerings.

vSphere Integrated Containers

The first initiative I want to look at is vSphere Integrated Containers (vIC), VMware’s evolutionary approach to containers. The concept behind vIC is that, according to VMware, a container is essentially “a binary executable, packaged with dependencies and intended for execution in a private namespace with optional resource constraints” and a container host is “a pool of compute resource with the necessary storage and network infrastructure to manage a number of containers.” If you accept this premise, VMware argues, then it matters little what actually constitutes a container or a container host as long as developers can access these resources using standard container APIs such as the Docker APIs.

vIC, which grew out of Project Bonneville, deconstructs container technology into its fundamental capabilities and then replicates those capabilities using technologies in the VMware portfolio such as ESXi, Photon OS, and Instant Clone. It is being positioned as a solution that bridges traditional vSphere infrastructure with containers by allowing VMware admins to manage both traditional VMs and this special type of container using familiar VMware tools such as vCenter.

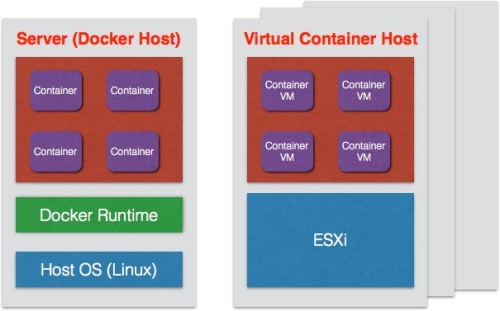

Take a look at the diagram below to see the comparison between a Docker container and a vSphere Integrated Container:

In the vIC construct, the ESXi hypervisor replaces a Linux server as the Docker container host OS. Instead of the Linux kernel isolation mechanisms, such as namespaces and cgroups, that are normally used to create containers, vIC leverages ESXi hardware virtualization mechanisms to create container VMs. To match the speed with which a Linux container can be booted compared with a traditional vSphere VM, vIC leverages Instant Clone technology to spin up its new container VMs. Instant Clone takes a running VM with a pico version of Photon OS and creates a zero-overhead copy called a Just enough VM (JeVM). This JeVM is a new container VM, and it shares the memory of the parent VM. When changes are made to the memory pages, a copy-on-write operation is performed to create a new memory page for the child container VM. This process is repeated each time a new container is needed.
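The memory sharing described above can be illustrated with a toy model. This is only a sketch of the general copy-on-write idea, not of how Instant Clone is implemented; the class and function names (`ParentVM`, `ContainerVM`, `instant_clone`) are hypothetical and a "page" here is just a Python value.

```python
class ParentVM:
    def __init__(self, pages):
        # Memory pages of the running parent; clones only ever read them.
        self.pages = list(pages)


class ContainerVM:
    def __init__(self, parent):
        self.parent = parent
        # Pages this child has written to; empty right after cloning.
        self.private_pages = {}

    def read(self, index):
        # Prefer the child's private copy, otherwise fall through to the parent.
        if index in self.private_pages:
            return self.private_pages[index]
        return self.parent.pages[index]

    def write(self, index, value):
        # Copy-on-write: a write lands in the child's own page, leaving the
        # parent's page (and every sibling's view of it) untouched.
        self.private_pages[index] = value


def instant_clone(parent):
    # Cloning copies no pages up front, which is why it is cheap.
    return ContainerVM(parent)


parent = ParentVM(["kernel", "modules", "scratch"])
first = instant_clone(parent)
second = instant_clone(parent)
first.write(2, "app-A data")

print(first.read(2))   # app-A data
print(second.read(2))  # scratch
print(len(first.private_pages), len(second.private_pages))  # 1 0
```

Only the page that `first` modified is duplicated; everything else is still served from the parent, which is the property that keeps each new container VM cheap in this model.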

One of the stated advantages of vIC is the ability to manage the container host using existing tools such as vCenter, since the container host is basically just an ESXi host or vSphere cluster. This means that vIC can take advantage of advanced vSphere capabilities such as HA, vMotion, and Distributed Resource Scheduling (DRS). This, I believe, is what enables an abstraction called the virtual container host (VCH). VMware defines a VCH as “a Container endpoint with completely dynamic boundaries. vSphere resource management handles container placement within those boundaries, such that a virtual Docker host could be an entire vSphere cluster one moment and a fraction of the same cluster the next.” While this may seem confusing to some, my understanding is that this is merely a way to express the fact that capabilities such as DRS will allow the container VMs to move freely between ESXi hosts in a vSphere cluster. It would be similar to calling a vSphere cluster that is hosting traditional virtual machines a virtual VM host.

As a container endpoint, a VCH is also the mechanism that exposes the Docker APIs to developers, who can then interact with vIC in the same manner that they can with Linux-based Docker containers. Meanwhile, a VCH and the vIC instances it hosts can be managed through the vSphere web client in much the same way that traditional vSphere resources are managed today.
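To make the idea of a single endpoint with "dynamic boundaries" more concrete, here is a minimal sketch. It assumes a least-loaded placement rule as a stand-in for DRS and a simple re-placement step as a stand-in for vMotion; the class, method, and host names are hypothetical and none of this reflects actual vSphere APIs.

```python
class VirtualContainerHost:
    """Hypothetical model: one endpoint backed by a changeable set of ESXi hosts."""

    def __init__(self, esxi_hosts):
        # Map ESXi host name -> container VM names currently placed on it.
        self.placement = {host: [] for host in esxi_hosts}

    def run(self, container_name):
        if not self.placement:
            raise RuntimeError("no ESXi hosts available")
        # Stand-in for DRS: place the container VM on the least-loaded host.
        target = min(self.placement, key=lambda h: len(self.placement[h]))
        self.placement[target].append(container_name)
        return target

    def remove_host(self, host):
        # Shrink the boundary; stand-in for vMotion relocating the container VMs.
        for name in self.placement.pop(host):
            self.run(name)

    def ps(self):
        # What the developer sees: containers, with no mention of ESXi hosts.
        return sorted(n for names in self.placement.values() for n in names)


vch = VirtualContainerHost(["esxi-01", "esxi-02", "esxi-03"])
for name in ["web", "db", "cache", "worker"]:
    vch.run(name)

vch.remove_host("esxi-03")
print(vch.ps())         # ['cache', 'db', 'web', 'worker']
print(vch.placement)    # {'esxi-01': ['web', 'worker'], 'esxi-02': ['db', 'cache']}
```

The point of the sketch is that the developer-facing view (`ps`) is unchanged when the set of hosts behind the endpoint changes, which is how I read the virtual container host definition.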

A summary slide of vIC was helpfully provided to me by George Hicken from VMware; please see below:

Photon Platform

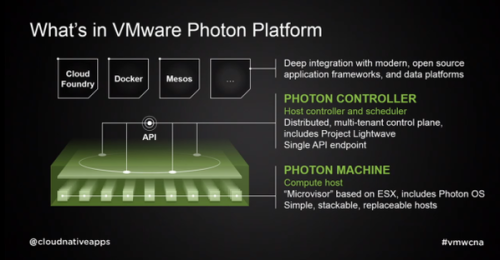

If vIC is the evolutionary solution for customers transitioning from traditional VMs to containers, then Photon Platform is VMware’s revolutionary solution for those who are going all in with containers and container management systems such as Kubernetes or Mesos. Photon is architected to provide the type of scale and speed being trumpeted by vendors who are advocating for “Google-Style” infrastructures in the datacenter. VMware is looking to accomplish this in Photon by replacing the traditional ESXi hypervisor with a new lightweight “microvisor,” using containers as the units of application delivery, and managing the stack with a new control plane, called the Photon Controller, that is optimized for container management.

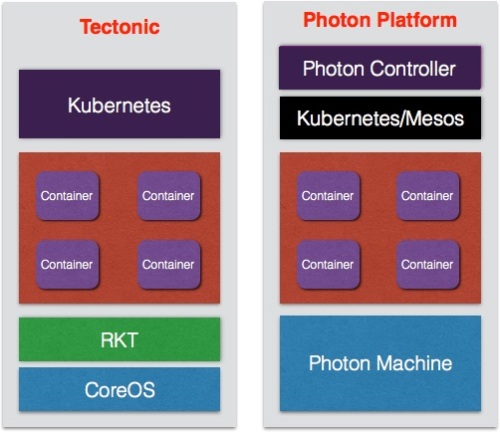

A good way to begin getting a handle on the Photon Platform is by comparing it with another container infrastructure, such as CoreOS’ Tectonic Platform.

Starting with the Photon Machine layer, you can see that the Photon Machine, which is a new ESXi-based “microvisor” combined with Photon OS, provides the container host OS and container runtime. This can be confusing at first since in the Tectonic stack, the Linux-based minimal OS called CoreOS is considered the container host OS and is differentiated from the container runtime, which is typically RKT but can also be Docker. In VMware’s literature, however, they seem to treat the microvisor as the container host OS and call Photon OS the container runtime. This is an area where I would like to have a better understanding of the technology.

Moving up the stack, the Photon Controller is a distributed control plane and resource manager that is intended to be used to manage a fleet of Photon Machines. The Photon Controller should not have the scalability limitations of a monolithic controller like vCenter. This is one of the reasons VMware itself pitches vIC, which will be managed by vCenter, as a container solution for moderate scale and Photon Platform as the solution for large-scale container infrastructures.

As the diagram above shows, the Photon Controller is being positioned as an uber-manager for container management/resource scheduling systems such as Docker Machine/Compose/Swarm, Kubernetes, and Apache Mesos. In other words, you would use Photon Controller to provision and manage Kubernetes and Mesos clusters while the latter container management systems would manage their own pods or nodes. An analogy might be vRealize Automation (vRA) managing different vSphere clusters where the ESXi hosts in the clusters are themselves managed by vCenter instances. The Photon Controller is being bundled with Project Lightwave to provide identity and access management, and future plans are to include other capabilities and plugins to enable the Controller to be used for infrastructure provisioning, monitoring, and management.
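The two-level split described above, where one layer hands out machines to clusters and each cluster schedules its own workloads, can be sketched as follows. This is purely illustrative and assumes a simple round-robin scheduler inside each cluster; the `Controller` and `ClusterManager` classes and the machine names are hypothetical and do not correspond to the Photon Controller API.

```python
class ClusterManager:
    """Hypothetical stand-in for a Kubernetes or Mesos cluster."""

    def __init__(self, framework, machines):
        self.framework = framework
        self.machines = machines
        self.workloads = []

    def schedule(self, workload):
        # The framework, not the controller, decides where its workloads run
        # (round-robin here, purely for illustration).
        machine = self.machines[len(self.workloads) % len(self.machines)]
        self.workloads.append((workload, machine))
        return machine


class Controller:
    """Hypothetical stand-in for the meta-manager role described above."""

    def __init__(self, machine_pool):
        self.free_machines = list(machine_pool)
        self.clusters = {}

    def create_cluster(self, name, framework, size):
        # The controller's job ends at provisioning machines for a cluster.
        if size > len(self.free_machines):
            raise ValueError("not enough free machines")
        machines = [self.free_machines.pop(0) for _ in range(size)]
        self.clusters[name] = ClusterManager(framework, machines)
        return self.clusters[name]


controller = Controller([f"photon-{i}" for i in range(5)])
k8s = controller.create_cluster("prod", "kubernetes", 3)
mesos = controller.create_cluster("batch", "mesos", 2)

print(k8s.schedule("frontend-pod"))  # photon-0
print(k8s.schedule("api-pod"))       # photon-1
print(mesos.schedule("etl-task"))    # photon-3
print(controller.free_machines)      # []
```

In this model the controller never sees individual pods or tasks, which mirrors the vRA and vCenter analogy: the upper layer manages clusters, and each cluster manages what runs inside it.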

Summary And Additional Resources

VMware is making some bold moves in its quest to remain relevant in a container-centric, cloud-native future. While many are quick to dismiss VMware as a legacy company that will be left behind, it is important to remember that the VMware customer base will likely be moving to containers cautiously. With vIC and Photon Platform, VMware has solutions that it can offer customers to help with that transition at whatever pace is appropriate for a specific customer. There is no guarantee of success, though, for VMware in this new cloud-native world where open source software reigns. They’ve taken some positive steps such as creating a cloud-native apps team and open sourcing their Photon Controller. However, it remains to be seen whether VMware gets it right and proves that it is not just paying lip service to open source. In any case, they should not be ignored or discounted.

Meanwhile, I encourage readers to look at the resources below to learn more about vIC and Photon Platform:

![vSphere Integrated Containers diagram](https://varchitectthoughts.files.wordpress.com/2015/09/150831-vsphere-int-container-diagram.jpg?w=500&h=267)

Thanks for putting time into making sense around the marketing. I see the position that VMware is taking, but given all of the functionality that is built into Kubernetes and Mesos I’m failing to see how the VMware offerings are apples to apples, even the Photon Platform. For example, do the VMware solutions have a service registry? How about name services? Also, are they building their own API so in a DevOps model we’ll need VMware specific tooling to instantiate containers? Or will we be forced to use the already ops-centric tooling of the vRealize suite? Many questions to be answered still.

Chris,

My understanding is that vIC will be Docker API compatible northbound to developers but use vCenter APIs and vCenter tooling southbound to the vSphere Admin. In the case of Photon, the controller will be a meta-manager that sits above container managers like Kubernetes and Mesos.

Ken

You may be interested in this vSphere Blog article on the two new cloud-native infrastructure approaches: https://blogs.vmware.com/vsphere/2015/08/how-to-choose-the-best-infrastructure-stack-for-your-cloud-native-applications.html

Eric,

Thank you. I did take a look at the blog post and tried to incorporate the information into my post.

Ken

Here is a recent post on vSphere Integrated Containers

https://blogs.vmware.com/vsphere/2015/10/vsphere-integrated-containers-technology-walkthrough.html

VIC does not use kernel namespaces, cgroups or any other Linux isolation mechanisms in the containerVMs; you will make use of these only if you choose to run docker within a VIC container. VIC uses hardware virtualization mechanisms to provide isolation between containers, not OS mechanisms.

The Linux mechanisms that docker depends upon have equivalents in the vSphere infrastructure which are leveraged instead, allowing for containers based on operating systems other than Linux, irrespective of OS support for layered filesystems, process isolation, et al.

Photon Pico is the kernel that’s in VIC Linux containers, but the root filesystem of those containers is the docker image used to create it, without any Photon or Linux distro portions. The only thing you get beyond the kernel are the associated kernel modules to permit demand loading if required.

The operational mapping in the diagram mentioned below should imply it, but it’s essentially dual mode management once the container is created, via vCenter and whatever Docker framework or client is desired.

Some additional references:

A pair of blog articles by Ben Corrie: https://blogs.vmware.com/cloudnative/author/ben_corrie/

Diagram of high level VIC structure: https://twitter.com/hickeng/status/641313044014964737

Windows teaser tweet: https://twitter.com/bensdoings/status/638973960680402945

George,

Thank you for the clarification.

Ken

Hi,

I have a few questions about VIC.

As mentioned, it is still hardware virtualization at the ESXi level. For the containers running within the Instant Clone VMs, would we have a Docker engine running in each container VM? Is that a correct understanding, or is there only one Docker engine in the hypervisor that creates a container in each clone VM?

There’s only one docker daemon per Virtual Container Host, which runs in an appliance VM, not the hypervisor (the Docker API box in this diagram: https://twitter.com/hickeng/status/641313044014964737). A Virtual Container Host consists of:

1. the appliance VM (serves the Docker API)

2. the resource pool it’s running in

3. a distributed port group for the bridge networking (or just a vSwitch if no VC but single ESX host instead)

When deployed in vCenter a Virtual Container Host spans the entire cluster. The last half of this talk demos both vCenter and ESX deployment scenarios: https://www.youtube.com/watch?v=XkFQw8ueT1w

They are still somewhat sparse, but there are architecture docs here: github.com/vmware/vic

George,

Thank you for jumping in and clarifying.

Ken
